New technologies and armament: rethinking arms control

New technologies give rise to previously unconsidered scenarios in arms control. In this ninth episode of the Clingendael Spectator series on arms control, Sibylle Bauer

Artificial Intelligence, nanotechnology, quantum computing… The buzz around technological developments and their impact on the use of force is highly visible in the media, in the policy world and in the work of think tanks.

Today, numerous arms control regimes are being abolished, contributing to the partial collapse of the global arms control architecture. This can be attributed to ongoing Great Power competition, regardless of these new technology developments. Still, technology does have a major impact on the effectiveness of arms control instruments. A clear example can be found in the developments in machine learning and robotics.

The current race of major powers to build hypersonic weapons (for which currently no arms control instrument exists) is further compressing the time window for deciding on a response in real time. The consequent shift away from contextual decision-making removes or reduces the opportunity to moderate or avoid the use of force, and to guard against the effects of an attack. The result is dramatic, since this race for speed may create unacceptable risks of nuclear accident and inadvertent escalation.

The fact that technology affects the way states prepare for and conduct war raises two sets of questions for efforts to control the means of warfare. First, what is the nature of this impact? And second, what does this mean for arms control? Do rules, mechanisms and institutions need to be adjusted, new ones invented, or both?

New, emerging, emerged?

In current policy debates, a number of different labels are used to categorise technologies based on their novelty, such as ‘new’, ‘emerging’ or ‘newly emerged’. However, technology by its very nature is evolving and emerging. Moreover, from an arms control perspective, the focus is on the emergence of security and military applications of technologies, rather than the emergence of technology as such.

Artificial Intelligence (AI), for example, originated in the 1950s and has thus been an emerging technology since then. The debate about AI applications to enhance the autonomy of weapon systems or to support military decision-making is rather recent, because developments in computer speed and processing capacity have enabled major advances in the technology and its applications.

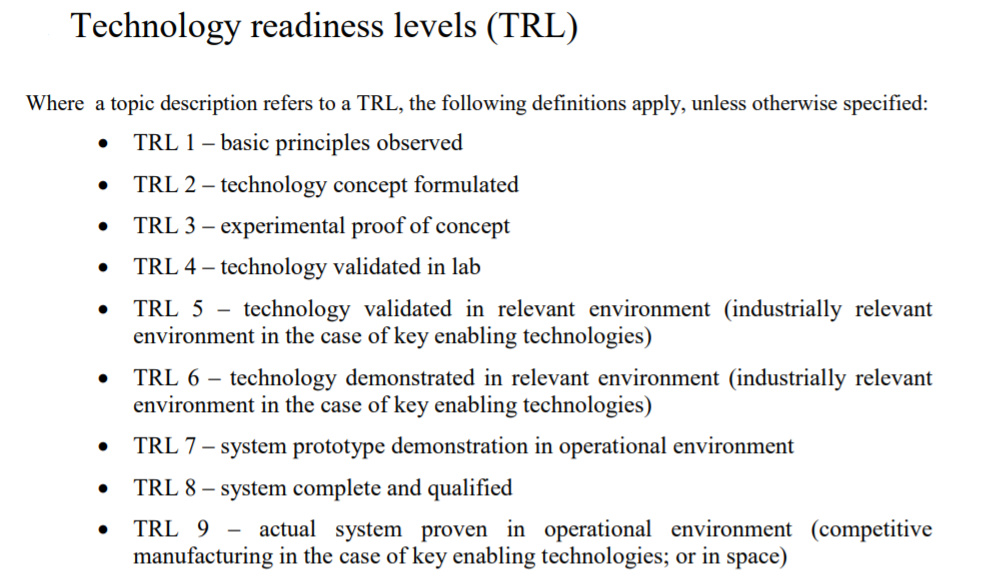

In this context, Technology Readiness Levels (TRLs), which assess maturity on a scale of 1 to 9, are useful, as they indicate how far a technology has progressed from basic to applied research. While TRLs are routinely referred to in the natural sciences, they are rarely referred to in the arms control community.
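To make the 1-to-9 scale concrete, the following is a minimal sketch rather than an official definition. The level names paraphrase the widely used wording of the scale, and the grouping into three bands (including the cut-offs at levels 3 and 6) is an assumption made purely for illustration; `maturity_band` is a hypothetical helper.

```python
from enum import IntEnum


class TRL(IntEnum):
    """Technology Readiness Levels; names paraphrase the commonly used 1-9 scale."""
    BASIC_PRINCIPLES_OBSERVED = 1
    CONCEPT_FORMULATED = 2
    PROOF_OF_CONCEPT = 3
    VALIDATED_IN_LAB = 4
    VALIDATED_IN_RELEVANT_ENVIRONMENT = 5
    DEMONSTRATED_IN_RELEVANT_ENVIRONMENT = 6
    PROTOTYPE_IN_OPERATIONAL_ENVIRONMENT = 7
    SYSTEM_COMPLETE_AND_QUALIFIED = 8
    PROVEN_IN_OPERATIONAL_ENVIRONMENT = 9


def maturity_band(level: TRL) -> str:
    """Group a TRL into a coarse band. The cut-offs are an illustrative assumption."""
    if level <= TRL.PROOF_OF_CONCEPT:
        return "basic research"
    if level <= TRL.DEMONSTRATED_IN_RELEVANT_ENVIRONMENT:
        return "applied research and development"
    return "operational demonstration and deployment"
```

An arms control analyst could, for instance, tag a military application with a level and use the band as a rough signal of how soon governance questions are likely to become pressing.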

Characteristics and consequences of technology developments

What are the characteristics and consequences of technology developments that need to be understood in order to define and implement appropriate governance responses in the form of arms control (narrowly or broadly defined)?

First, technological developments have reinforced a blurring of lines between familiar and comfortable dichotomies such as offensive–defensive, conventional–nuclear, war–peace, civilian–military and internal–external. These word pairs have shaped our thinking about risks and threats, and consequently our responses.

However, much of the current reality takes the form of various shades of grey rather than black and white. Cyberattacks are one obvious example.

Cyber capabilities are being employed in situations that are not existing armed conflicts, raising questions about the applicable legal framework, as well as whether a cyber 'attack' can trigger the beginning of an armed conflict. The coining of the term 'nuclear entanglement', prompted by systems capable of carrying either nuclear or conventional warheads, also illustrates this trend.

While the use of certain technologies provides previously unconsidered scenarios, it does not fundamentally change the applicable law

The blurring of lines between war and peace creates obvious challenges for International Humanitarian Law (IHL), also known as the law of armed conflict. For example, the technical capacity to project force from afar, beyond the battlespace, through the use of Unmanned Aerial Vehicles (UAVs) creates a challenge for interpreting and applying IHL, particularly its temporal and geographic scope.

Second, and closely linked: the interaction between technologies is arguably ever more security-relevant but insufficiently appreciated because of institutional, academic and conceptual stove-piping, which does not create natural spaces to explore these interconnections. This pertains both to the combination of old and new technologies (e.g. nuclear and AI) and to the combination of two independently developed new technologies (e.g. biotech and cyber).

These technologies are typically dealt with in different departments within ministries; at different university faculties where career paths and course structures tend to discourage interdisciplinarity; and through different treaties and corresponding meetings of states parties and expert communities.

The conceptual, legal and institutional ‘boxes’ created – notably along nuclear, biological, chemical, conventional end-uses – structure competences in ministries as much as agendas in international organisations. However, such stovepipes ignore interlinkages such as nuclear-conventional entanglement and nuclear risks through cyber vulnerabilities. As such they do not correspond to technological realities.

Nuclear risk through the exploitation of cyber vulnerabilities or misinformation can result in accidents or inadvertent escalation.

Third, the diffusion of technology across sectors, both geographical and in terms of numbers and types of actors involved, has increased. Key technological developments take place in the private sector and academia in an increasing number of countries. Additionally, DIY (do-it-yourself) IT and bio-communities are becoming increasingly important actors.

This means that more and different types of stakeholders have to be engaged in understanding threats and risks, and in the upstream end of arms control through preventive governance mechanisms. The dual-use nature of technology also means that concerns that arms control approaches to technology could have a chilling effect on peaceful research activities need to be addressed.

Fourth, it is often said that the pace of technological change has increased, but the impact of this is both over- and underestimated. Perhaps more important is that the nature of machine-human interaction has become a key issue in arms control discussions regarding the desirable and the possible role of humans in warfare.

This raises not only issues of human control, but also of bias in designing and training AI as well as the reliability and predictability of technology due to a lack of transparency in how complex software works.

Provocatively, one could speak of the 'illusion of human control' when the amount or complexity of information received, combined with the small time window for human decision, does not allow for a well-thought-through response.

A fifth and last aspect that must not be forgotten is the role technology can play in strengthening arms control. Examples that have been highlighted include verification technologies such as satellite imagery and drone surveillance, as well as the potential use of blockchain in export control.

Adjusting existing regimes

This leads to the question of whether existing governance mechanisms, including arms control regimes, are sufficient to capture new technologies. An appropriate response that considers the characteristics outlined above requires both new regimes and adjustments to current regimes.

Let us take the example of dual-use export control, which seeks to capture transfers of technologies with military and civilian applications. Here, the increase of intangible electronic transfers requires fundamental changes to enforcement and compliance approaches and tools.

Adjusting existing arms control regimes will require not only the active involvement of a much broader range of stakeholders (such as the private sector and academia), but also creative, outside-the-box thinking to find ways of addressing challenges in their full complexity.

Decompartmentalising institutions and approaches requires overcoming inert resistance to change and finding a common language between policy makers, technology specialists, lawyers, and the different scientific disciplines.

New approaches to arms control

How could new arms control regimes be devised in order to cover new developments? One way to bridge the traditional stove-piping could be a cross-cutting approach that does not choose a specific weapon system or technology as the organising principle. Rather, it would be based on a common paradigm such as the protection of civilians – rooted in international law – or human control.

An interesting mechanism for anticipating technologies that have yet to be discovered or militarily applied has been standard practice in export controls for decades: the so-called 'catch-all mechanism'. The idea behind this concept is to avoid unnecessary controls of technologies with widespread civilian uses, while creating a legal mechanism to prohibit exports in case of suspicion or knowledge of an undesirable end-use.

In the case of export controls, end-use defined as undesirable mostly pertains to chemical, biological or nuclear weapons, or their delivery systems. Similar controls for end-use in connection with human rights abuses have also been discussed at the European Union level, but are subject to considerable controversy and have not been agreed to date.
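The logic of a catch-all can be expressed as a simple decision rule. The sketch below is a simplified illustration under stated assumptions, not a rendering of any actual regulation: the names `ExportRequest`, `assess` and the end-use identifiers are hypothetical, and real regimes involve licensing procedures and legal tests that are not modelled here.

```python
from dataclasses import dataclass
from typing import Optional

# End-uses the text describes as undesirable; the identifiers are illustrative.
UNDESIRABLE_END_USES = frozenset(
    {"chemical_weapons", "biological_weapons", "nuclear_weapons", "delivery_systems"}
)


@dataclass(frozen=True)
class ExportRequest:
    item: str
    on_control_list: bool                     # item appears on an agreed control list
    suspected_end_use: Optional[str] = None   # None means no suspicion or knowledge


def assess(request: ExportRequest) -> str:
    """Return a coarse outcome for a proposed export (hypothetical categories)."""
    if request.on_control_list:
        return "controlled: listed item"
    # Catch-all: an unlisted item is captured only because of its end-use.
    if request.suspected_end_use in UNDESIRABLE_END_USES:
        return "controlled: catch-all"
    return "not controlled"
```

The point of the design is that the control list stays short: a widely used civilian item passes freely unless there is suspicion or knowledge of an undesirable end-use, in which case the same item falls under control.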

Perhaps an end-use focused approach could inspire other regimes, and agreement might be found on similar controls based on an agreed undesirability of autonomous weapons that are beyond any form of human control.

Other upstream components of arms control are soft measures such as codes of conduct for scientists, communities of DIY researchers and enterprises.

Finally, unilateral constraints based on legal reviews of IHL could also be considered part of an upstream arms control category.

The way forward

Much policy attention currently focuses on what has been termed the 'crisis of multilateralism'. This makes it ever more important to identify mutual interests in arms control approaches. Vulnerabilities to terrorists, cyber vulnerabilities, and the risk of accidents and miscalculation could be building blocks for common ground.

The time window for deciding how to react to an actual or assumed nuclear attack was arguably always too small for thorough reflection. Now technology is further compressing the time window for humans to collect, analyse, and process information. Decisions are likely to be more strategic than tactical and also of a more general nature and less context-specific, with fewer opportunities to cancel the use of force.

One element of effective crisis management will thus require finding a way to enlarge the window for human intervention in a form of Entschleunigung ("slowing down"). Various proposals to this end have been put forward, such as a ban on nuclear-tipped cruise missiles.

New approaches will also require critical reflection on the terminology that is used, since the continued use of outdated or incorrect concepts does not facilitate fresh thinking.

Additionally, arms control cannot be detached from specific contexts and perceptions. Threat perceptions and regional dynamics are thus crucial framing elements. A strengthening of strategic empathy (the ability to put yourself in the other's shoes) cannot be taken forward without regional security dialogues at many levels and involving different types of actors.

Valuing existing regimes will also be a key element. Among the features of effective arms control are the very powerful and more-than-ever-needed aspects of confidence building and strategic empathy. Traditional arms control agreements have played a crucial role in building trust, as the human factor of verification is important.

This is even more important given often-referenced perceptions of increased unpredictability and instability. Such agreements also constitute channels of communication that keep functioning when high-level policy dialogue breaks down.

The pace, nature and agents of technological advances fundamentally alter the landscape arms control seeks to govern, and thus require a structural reconceptualisation of the way we think about and approach arms control. Preventive, upstream approaches and a renewed attention to trust building and strategic empathy will be key to finding a way forward.
